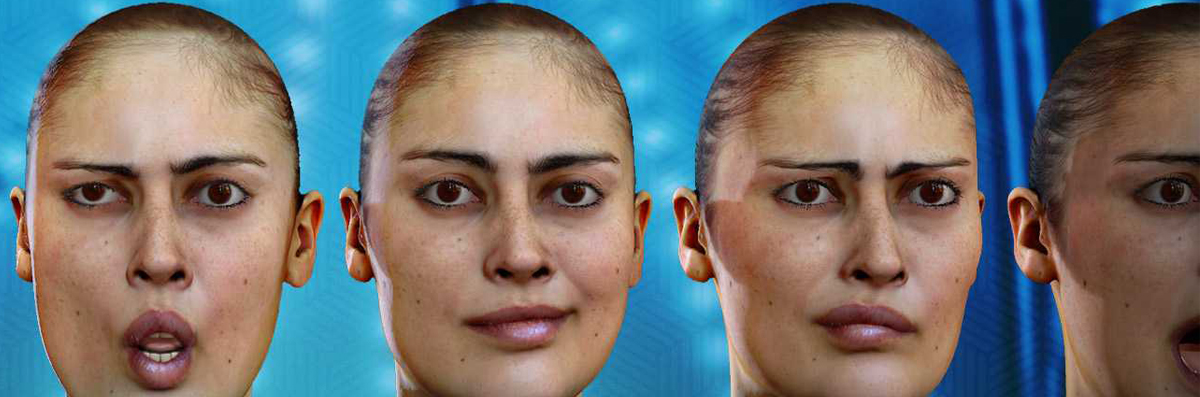

Together with Pixelgun Studio, a high-end 3D scanning agency, we’ve just released a proof of concept demonstrating facial tracking trained directly from scans, along with a direct solve on a rig in Maya, delivering high-fidelity raw results.

This technology breakthrough lets you track and solve to the digital double of an actor with a very high level of precision, thanks to a process that automatically extracts key poses from scanned data and solves them directly onto a rig, taking advantage of the rig's intrinsic logic.

Performer, our core markerless tracking software, relies on a specific tracking technology based on machine learning that requires two essential steps in its workflow:

• Building the Tracking Profile: this first step consists of manually annotating key poses of an actor (from a video source) to give the software examples. This step usually takes 2 to 3 hours.

• Building the Retargeting Profile: this second step requires the animator to adjust controllers in the 3D software to retarget those key poses to the dedicated character. While it takes less time than building the tracking profile, building the retargeting profile is also done manually. This step brings a lot of flexibility, as the underlying machine learning process makes it possible to retarget to any character and any rig.

The Dynamixyz R&D team has always focused on both reducing the time spent training the system and increasing the quality of the results, to save animators precious time during production.

After dealing with massive amounts of scans over the years, it became obvious that this data should be reused to help specialize the tracker to the actor’s morphology.

Scanned data was very hard to collect a few years ago: studios were not equipped, and the budgets involved were very high. Since then, scanning technology has become more accessible and affordable. Many studios have built pipelines that include photogrammetry or 3D scanning, and companies can now handle the process and deliver clean data efficiently.

Dynamixyz's long-time client Visual Concepts introduced Dynamixyz to Pixelgun Studio, which provides scans for NBA2K and other titles. The two companies joined forces to work on a scan-based facial mocap workflow.

The performance capture with the Stereo HMC was conducted at the same time as the scanning session by Pixelgun Studio.

Pixelgun delivered registered scanned data with textures covering 80 expressions taken with 63 cameras trained on the head for expression capture, and 145 cameras trained on the subject for body capture.

Scanned data gives information on the geometry and textures (such as wrinkles or other very distinctive facial features), which makes it possible to retrieve very accurate information about both morphology and appearance.

As lighting conditions are key when training the tracking, manual annotation is still required for 2 to 3 frames extracted from the production shots to retrieve the illumination pattern. The scanned data the Dynamixyz team received from Pixelgun enabled it to recreate a synthetic double with illumination recovery and to extract key poses from this digital double as if they were frames recorded with a head-mounted camera.

The tracking was then processed automatically based on geometric and texture information, almost completely replacing the annotation step. “With these images generated as if they were taken with a Head Mounted Camera and geometry information, we were able to build a Performer tracking profile as if it had been annotated manually,” explains Vincent Barrielle, R&D engineer and author of the Scan-Based Solving technology. “It brings higher precision as it has been generated with very high-quality scans. It also gives the opportunity to have a high volume of expressions.”

Tracking from scanned data reduces the error-prone and time-consuming step of manual annotation and is far more accurate since it comes from the specific face information of the actor included in the scans.

The Dynamixyz R&D team also pushed this proof of concept further, reasoning that the truly usable outcome was to solve directly on the controllers of the rig rather than on the scans.

To this end, the team developed a system that exports the compute graph of the rig logic and reproduces it inside the Dynamixyz system, duplicating all the node types so that simulations can be run. This step depends on the rig logic, and thus still needs to be tested and approved on a wide variety of rigs.

“We are able to extract the rig knowledge from the Maya scene and use it to build a custom solver that will find out how the rig works from tracking data,” explains Vincent Barrielle. “It is demonstrated right now for a use case in which you want to animate a digital double, an exact clone of the actor based on scans. We can totally imagine transferring this process onto a completely different rig, another character (human or not) in the future. The real difference here is that our solving is not based on a non-exhaustive set of expressions anymore. It now knows all the possibilities of the rig and is able to navigate through it to find the right settings for a dedicated expression.”
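To make the idea of solving directly on rig controllers more concrete, here is a minimal sketch in Python. It is not the Dynamixyz solver and does not use any Maya API: the two-controller toy rig (`evaluate_rig`), its coefficients, the controller names, and the `solve_controls` helper are all hypothetical. It only illustrates the general principle of evaluating a reproduced rig function and searching the controller space, here with projected gradient descent on finite-difference gradients, until the rig output matches tracked landmark positions.

```python
# Toy sketch only: the rig, its coefficients, and every name here are hypothetical.

def evaluate_rig(controls):
    """Stand-in for a reproduced rig graph: controller values -> landmark coordinates."""
    jaw_open, smile = controls
    chin_y = -1.0 - 0.5 * jaw_open
    corner_x = 0.5 + 0.2 * smile
    corner_y = -0.5 + 0.1 * smile - 0.1 * jaw_open
    # The two controllers interact, so the mapping is not purely linear.
    lower_lip_y = -0.6 - 0.4 * jaw_open * (1.0 - 0.3 * smile)
    return [chin_y, corner_x, corner_y, lower_lip_y]


def squared_error(controls, target):
    """Sum of squared differences between the rig output and the tracked landmarks."""
    return sum((p - t) ** 2 for p, t in zip(evaluate_rig(controls), target))


def solve_controls(target, steps=500, learning_rate=1.0, eps=1e-5):
    """Find controller values in [0, 1] whose rig output best matches `target`."""
    controls = [0.0, 0.0]  # start from the neutral pose
    for _ in range(steps):
        gradient = []
        for i in range(len(controls)):
            # Central finite difference of the error with respect to controller i.
            up = list(controls)
            up[i] += eps
            down = list(controls)
            down[i] -= eps
            slope = (squared_error(up, target) - squared_error(down, target)) / (2 * eps)
            gradient.append(slope)
        # Gradient step, then clamp each controller back into its assumed valid range.
        controls = [
            min(1.0, max(0.0, c - learning_rate * g))
            for c, g in zip(controls, gradient)
        ]
    return controls


if __name__ == "__main__":
    # Pretend the tracker observed the landmarks produced by jaw_open=0.6, smile=0.3.
    tracked = evaluate_rig([0.6, 0.3])
    print(solve_controls(tracked))
```

Because the solver queries the rig function itself rather than a fixed list of example expressions, it can reach any pose the rig can express. A production rig would have far more controllers and node types, and would likely need a more robust optimizer than this one.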

“This technology is still at the POC level, but we aim to make it accessible through a product that is scheduled to ship in 2020,” comments Nicolas Stoiber, head of R&D and CTO of Dynamixyz. “We believe other companies may have already developed such workflows in-house, as tailor-made solutions rather than as packaged software. This is really at the heart of Dynamixyz’s DNA: we make technologies accessible and usable for the whole industry. When Performer was launched,” Nicolas recollects, “markerless tracking technology already existed in high-end studios. We just made it available for everyone as we industrialised the tech, offering it as a software: Performer. We are now aiming at developing the same pattern for our scan-based tracker and solver.”

Dynamixyz is currently offering an Early-Access Program to some key partners so they can help the company improve the workflow and take advantage of this technology from now until the official launch next year.